Commit 7fdbe81 · 1 Parent(s): 335f67e

Update README.md

README.md CHANGED

|

@@ -18,13 +18,13 @@ widget:

|

|

| 18 |

|

| 19 |

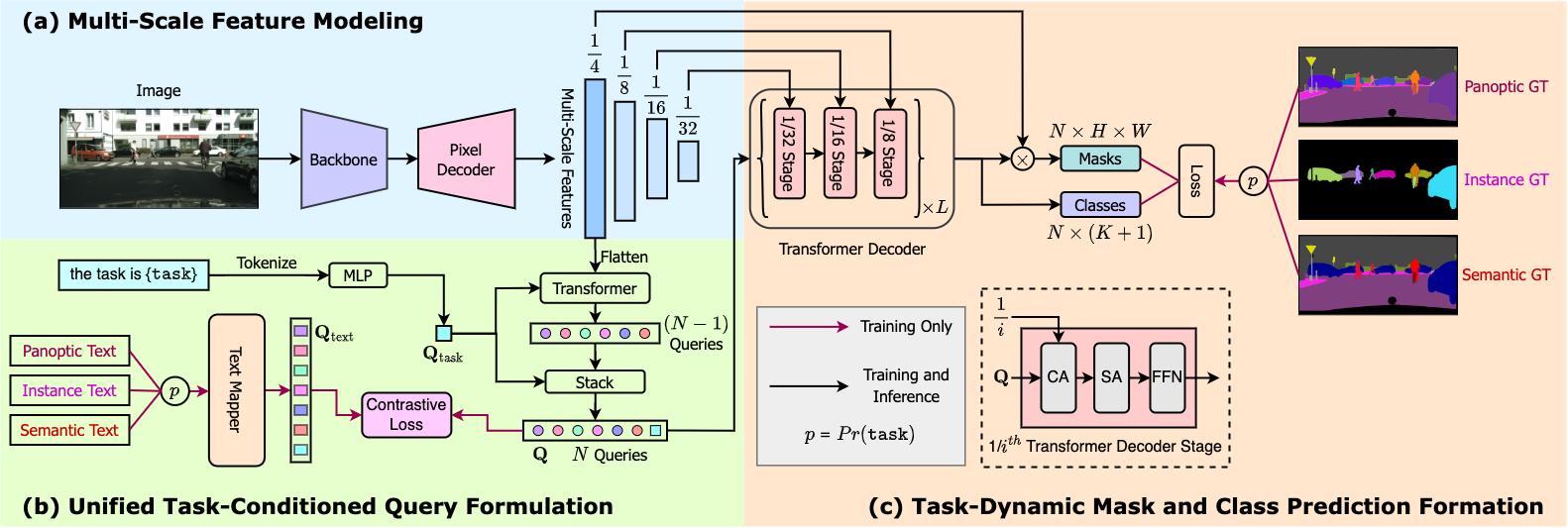

OneFormer model trained on the ADE20k dataset (tiny-sized version, Swin backbone). It was introduced in the paper [OneFormer: One Transformer to Rule Universal Image Segmentation](https://arxiv.org/abs/2211.06220) by Jain et al. and first released in [this repository](https://github.com/SHI-Labs/OneFormer).

|

| 20 |

|

| 21 |

-

|

| 22 |

|

| 23 |

## Model description

|

| 24 |

|

| 25 |

OneFormer is the first multi-task universal image segmentation framework. It needs to be trained only once with a single universal architecture, a single model, and on a single dataset, to outperform existing specialized models across semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided for training, and task-dynamic for inference, all with a single model.

|

| 26 |

|

| 27 |

-

|

| 28 |

|

| 29 |

## Intended uses & limitations

|

| 30 |

|

|

|

|

| 18 |

|

| 19 |

OneFormer model trained on the ADE20k dataset (tiny-sized version, Swin backbone). It was introduced in the paper [OneFormer: One Transformer to Rule Universal Image Segmentation](https://arxiv.org/abs/2211.06220) by Jain et al. and first released in [this repository](https://github.com/SHI-Labs/OneFormer).

|

| 20 |

|

| 21 |

+

|

| 22 |

|

| 23 |

## Model description

|

| 24 |

|

| 25 |

OneFormer is the first multi-task universal image segmentation framework. It needs to be trained only once with a single universal architecture, a single model, and on a single dataset, to outperform existing specialized models across semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided for training, and task-dynamic for inference, all with a single model.

|

| 26 |

|

| 27 |

+

|

| 28 |

|

| 29 |

## Intended uses & limitations

|

| 30 |

|
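
The task-token conditioning mentioned in the model description can be illustrated with a minimal sketch. It assumes the task is passed to the model as a short text prompt of the form "the task is {task}", which is then tokenized into the task token; treat the exact wording of that template as an assumption rather than something this README states. The helper name `build_task_prompt` and the `TASKS` tuple are hypothetical and exist only for illustration.

```python
# Hypothetical helper, not part of any library: it only illustrates how one
# model can be switched between tasks by changing a text prompt.

# The three tasks named in the model description.
TASKS = ("semantic", "instance", "panoptic")


def build_task_prompt(task: str) -> str:
    """Return the text prompt that would condition the model on `task`.

    The template "the task is {task}" is an assumption for this sketch.
    """
    if task not in TASKS:
        raise ValueError(f"unknown task {task!r}; expected one of {TASKS}")
    return f"the task is {task}"


if __name__ == "__main__":
    # Same model, same weights: only the prompt changes per task.
    for task in TASKS:
        print(build_task_prompt(task))
```

Because the task is an input rather than a separate head or checkpoint, switching from semantic to panoptic segmentation at inference time only requires a different prompt.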