Qwen3-Yoyo-V4-42B-A3B-Thinking-TOTAL-RECALL-TNG-IV-PKDick-V

This repo contains the full-precision source weights, in "safe tensors" format, for generating GGUF, GPTQ, EXL2, AWQ, HQQ and other formats. The source can also be used directly.

EXPERIMENTAL - DUAL Merge of TWO FINE TUNES:

MODEL 1:

This is a limited fine tune (selected layers, experimental methods) on an in-house Star Trek TNG dataset (sci-fi, all seasons) using Unsloth. This will add some "sci-fi / TNG Magic" to the model.

This version has STRONGER training, with more depth than TNG-III (and I and II), using a different training method.

https://huggingface.co/DavidAU/Qwen3-Yoyo-V4-42B-A3B-Thinking-TOTAL-RECALL-ST-TNG-IV

MODEL 2:

This is a limited fine tune (selected layers, experimental methods) on FIVE in-house datasets (of PK Dick - whose works were the basis for "Total Recall") using Unsloth. This will add some of "his unique point of view" to the model.

This version has STRONGER training, with more depth than TNG-III (and I and II), using a different training method.

https://huggingface.co/DavidAU/Qwen3-Yoyo-V4-42B-A3B-Thinking-TOTAL-RECALL-PKDick-V

MERGE:

Simple DARE TIES merge of both models, instead of training one model on all datasets or training an already-trained model further on new datasets. DARE TIES merges the best of both models together in one step.
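For readers new to the method, here is a toy sketch of the general DARE TIES idea on plain Python lists. It is not the actual merge recipe or tooling used for this model. The function names, the `density` value and the tiny "weights" are all made up for illustration. DARE randomly drops part of each fine tune's delta from the base and rescales what is left; TIES then keeps, per parameter, only the deltas that agree with the dominant sign.

```python
import random

def dare_prune(delta, density, rng):
    """DARE step: keep each delta with probability `density`, rescale survivors by 1/density."""
    return [d / density if rng.random() < density else 0.0 for d in delta]

def ties_merge(deltas):
    """TIES-style step: elect a sign per parameter, then average the deltas that agree with it."""
    merged = []
    for values in zip(*deltas):
        sign = 1.0 if sum(values) >= 0 else -1.0
        agreeing = [v for v in values if v * sign > 0]
        merged.append(sum(agreeing) / len(agreeing) if agreeing else 0.0)
    return merged

def dare_ties(base, finetuned_models, density=0.5, seed=0):
    """Merge several fine tunes of the same base into one set of weights."""
    rng = random.Random(seed)
    deltas = [[f - b for f, b in zip(model, base)] for model in finetuned_models]
    pruned = [dare_prune(delta, density, rng) for delta in deltas]
    return [b + d for b, d in zip(base, ties_merge(pruned))]

# Hypothetical 4-parameter "models"; density=1.0 disables the random drop
# so the result is deterministic and easy to check by hand.
base = [0.0, 1.0, -1.0, 0.5]
tng = [0.2, 1.0, -1.4, 0.5]
pkd = [0.1, 1.3, -0.8, 0.5]
merged = dare_ties(base, [tng, pkd], density=1.0)
print(merged)
```

In the third parameter the two fine tunes pull in opposite directions, so only the larger (negative) change survives; that sign election is what keeps the two fine tunes from cancelling each other out.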

Completely mad science TIMES 2.

TWO example generations at the bottom of the page.

IMPORTANT NOTE:

Due to training levels, thinking may not "activate" or be "irregular" in this model.

This model (and other TNG Yoyo versions) excels at both coding and creative work.

I suggest you try all FOUR (Yoyo V3 - 3 versions, Yoyo V4 - one version) versions to see which meets your use case(s) better.

Suggest 8-10 experts, temp .7 ish, rep pen 1.05 to 1.1 ; quants at least Q4.

This model is for CODING and programming in all major programming languages and many minor ones too AND GENERAL USAGE.

This model is based on Qwen3-Coder-30B-A3B-Instruct (MOE, 128 experts, 8 activated), with Brainstorm 20X (by DavidAU) - details at bottom of this page.

This model is built on a merged model (3 steps, 3 models) from:

https://huggingface.co/YOYO-AI/Qwen3-30B-A3B-YOYO-V4

(you may want to visit this repo for settings/info too)

The Brainstorm adapter will improve general performance and "out of the box" thinking.

This creates a model of 42B parameters, 67 layers and 807 tensors.

This version has a NATIVE context of 1 million tokens.

This is a thinking block model.

I have included an optional system prompt to invoke "thinking" in this model, if you want to activate it.

SETTINGS:

For coding and programming, set the number of active experts to:

- 6-8 for general work.

- 10 for moderate work.

- 12-16 for complex work, long projects, complex coding.

- Suggest min context window 4k to 8k.

- And for longer context, and/or multi-turn -> increase experts by 1-2 to help with longer context/multi turn understanding.
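The expert suggestions above can be written down as a small lookup. This is only a convenience sketch: the function name and the workload labels are hypothetical, and how you actually apply the number depends on your backend.

```python
def suggest_active_experts(workload: str, long_context: bool = False) -> tuple:
    """Return a (low, high) range of active experts for a workload label."""
    ranges = {
        "general": (6, 8),
        "moderate": (10, 10),
        "complex": (12, 16),
    }
    low, high = ranges[workload]
    if long_context:
        # Longer context and/or multi-turn: raise experts by 1-2.
        low, high = low + 1, high + 2
    return low, high

print(suggest_active_experts("general"))
print(suggest_active_experts("complex", long_context=True))
```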

Recommended settings - general:

- Rep pen 1.05 to 1.1 ; however rep pen of 1 will work well (may need to raise it for lower quants/fewer activated experts)

- Temp .3 to .6 (+- .2)

- Topk of 20, 40 or 100

- Topp of .95 / min p of .05

- Suggest min context window 4k to 8k.

- System prompt (optional) to focus the model better.
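As a rough sketch, the general settings can be collected in one place and sanity-checked before sending them to whatever backend you use. The function and the dictionary key names here are assumptions; different programs name these samplers differently (for example "repeat_penalty" vs "repetition_penalty"), so map them to your backend's names.

```python
ALLOWED_TOP_K = (20, 40, 100)

def general_sampler_settings(temperature: float = 0.5, top_k: int = 40,
                             repetition_penalty: float = 1.05) -> dict:
    """Build a settings dict that stays inside the ranges recommended above."""
    # Temp .3 to .6, plus or minus .2, gives an outer range of 0.1 to 0.8.
    if not 0.1 <= temperature <= 0.8:
        raise ValueError("temperature outside the recommended range")
    if top_k not in ALLOWED_TOP_K:
        raise ValueError("top_k should be 20, 40 or 100")
    if not 1.0 <= repetition_penalty <= 1.1:
        raise ValueError("repetition penalty outside the recommended range")
    return {
        "temperature": temperature,
        "top_k": top_k,
        "top_p": 0.95,
        "min_p": 0.05,
        "repetition_penalty": repetition_penalty,
    }

settings = general_sampler_settings(temperature=0.4, top_k=20)
print(settings)
```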

This is the refined version -V1.4- from this project (see this repo for all settings, details, system prompts, example generations etc etc):

https://huggingface.co/DavidAU/Qwen3-55B-A3B-TOTAL-RECALL-Deep-40X-GGUF/

This version 2 is slightly smaller, with further refinements to the Brainstorm adapter and uses the new "Qwen3-30B-A3B-Instruct-2507".

Review and Specialized Settings for this model (V 1.4):

https://www.linkedin.com/posts/gchesler_haskell-postgres-agentic-activity-7347103276141596672-_zbo/

You may also want to see (root model of Total Recall series - Version 1):

https://huggingface.co/Qwen/Qwen3-30B-A3B

AND Version 2 root model:

https://huggingface.co/Qwen/Qwen3-Coder-30B-A3B-Instruct

For additional settings, tool use, and other model settings.

Summary of root model below, followed by FULL HELP SECTION, then info on Brainstorm 20x.

OPTIONAL SYSTEM PROMPT - INVOKE "Thinking":

Enable deep thinking subroutine. You are a deep thinking AI, you may use extremely long chains of thought to deeply consider the problem and deliberate with yourself via systematic reasoning processes to help come to a correct solution prior to answering. You should enclose your thoughts and internal monologue inside ###ponder### ###/ponder### tags, and then provide your solution or response to the problem.

Use this to INVOKE "thinking" block(s) in the model. These will generally be a lot shorter than the 1000s of tokens seen in most "thinking" models.

If you use this prompt, you may need to raise "rep pen" to 1.08 to 1.1, to prevent "loops" in the "thought block(s)" ; especially in lower quants.

If you change "ponder" to a different word/phrase this will affect model "thinking" too.
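If you use the system prompt above, you may want to separate the "thought block(s)" from the final answer in your own scripts. A minimal sketch, assuming the model actually emits the ###ponder### tags exactly as written in the prompt (it may not always do so); the function name and sample text are made up:

```python
import re

# Matches one thought block, including newlines inside it.
PONDER_BLOCK = re.compile(r"###ponder###(.*?)###/ponder###", re.DOTALL)

def split_ponder(response: str) -> tuple[list[str], str]:
    """Return (list of thought blocks, answer text with the blocks removed)."""
    thoughts = [block.strip() for block in PONDER_BLOCK.findall(response)]
    answer = PONDER_BLOCK.sub("", response).strip()
    return thoughts, answer

sample = (
    "###ponder###\nCheck the base case first.\n###/ponder###\n"
    "Use recursion with memoization."
)
thoughts, answer = split_ponder(sample)
print(thoughts)
print(answer)
```

If you change "ponder" to another word in the prompt, change the pattern to match.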

QUANTS

GGUF? GGUF Imatrix? Other?

Special thanks to Team Mradermacher, Team Nightmedia and other quanters!

See under "model tree", upper right and click on "quantizations".

New quants will automatically appear.

Qwen3-Coder-30B-A3B-Instruct

Highlights

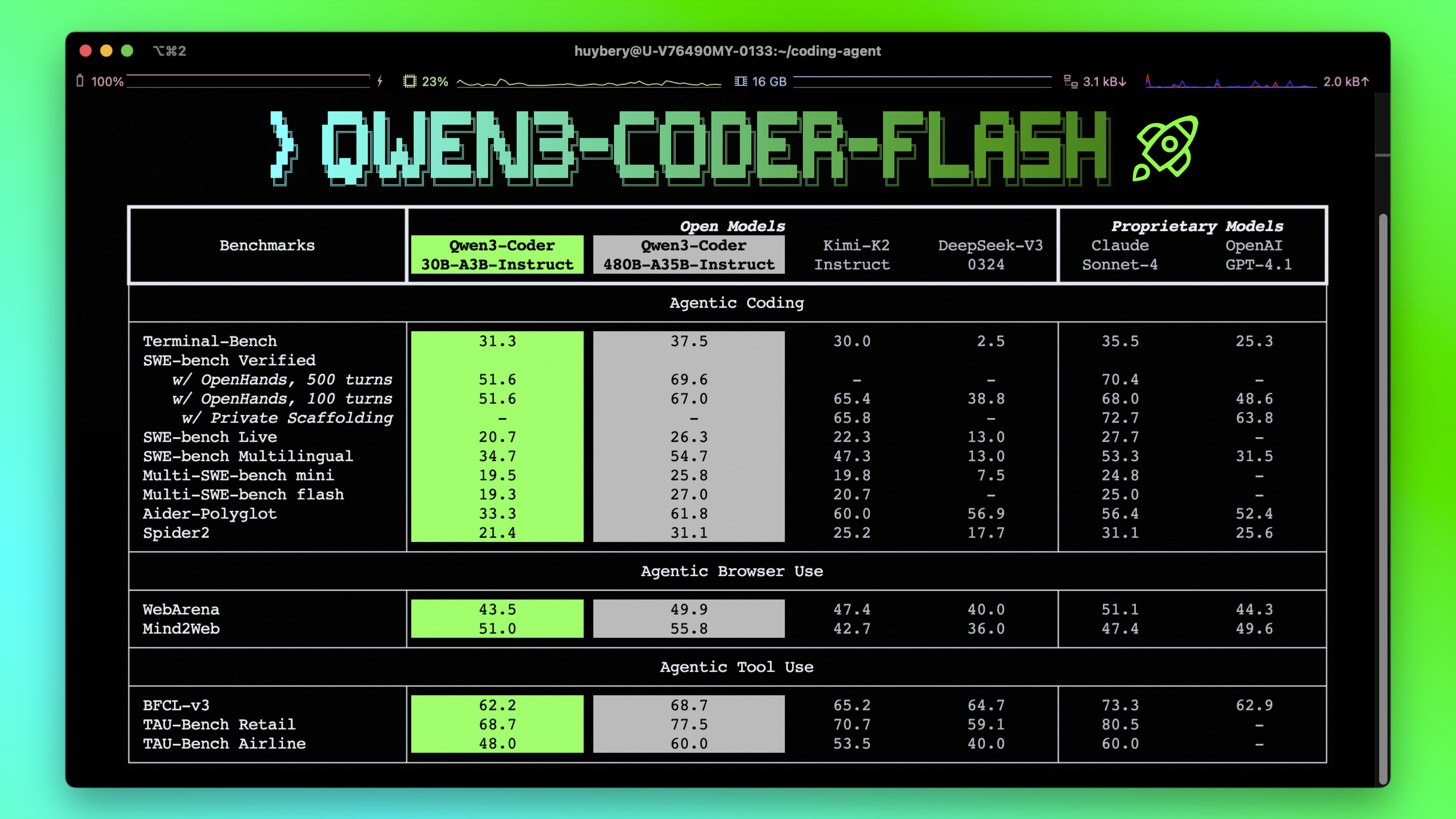

Qwen3-Coder is available in multiple sizes. Today, we're excited to introduce Qwen3-Coder-30B-A3B-Instruct. This streamlined model maintains impressive performance and efficiency, featuring the following key enhancements:

- Significant Performance among open models on Agentic Coding, Agentic Browser-Use, and other foundational coding tasks.

- Long-context Capabilities with native support for 256K tokens, extendable up to 1M tokens using Yarn, optimized for repository-scale understanding.

- Agentic Coding support for most platforms, such as Qwen Code and CLINE, featuring a specially designed function call format.
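To make the long-context point concrete, here is the arithmetic behind a YaRN scaling factor, using the 262,144-token native length listed in the model overview. The key names follow the "rope_scaling" convention used in Hugging Face config files for Qwen models; whether a given runtime (or a given quant of this merge) honors them is an assumption, and the helper name is hypothetical.

```python
import math

NATIVE_CONTEXT = 262_144    # native context length from the model overview
TARGET_CONTEXT = 1_000_000  # "up to 1M tokens" from the highlights

def yarn_rope_scaling(native: int, target: int) -> dict:
    """Round the target/native ratio up to a whole scaling factor."""
    factor = float(math.ceil(target / native))
    return {
        "rope_type": "yarn",
        "factor": factor,
        "original_max_position_embeddings": native,
    }

rope_scaling = yarn_rope_scaling(NATIVE_CONTEXT, TARGET_CONTEXT)
print(rope_scaling)
```

A factor of 4.0 on a 262,144-token base covers 1,048,576 positions, which is where the "1M" figure comes from.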

Model Overview

Qwen3-Coder-30B-A3B-Instruct has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 30.5B in total and 3.3B activated

- Number of Layers: 48

- Number of Attention Heads (GQA): 32 for Q and 4 for KV

- Number of Experts: 128

- Number of Activated Experts: 8

- Context Length: 262,144 natively.

NOTE: This model supports only non-thinking mode and does not generate <think></think> blocks in its output. Meanwhile, specifying enable_thinking=False is no longer required.

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our blog, GitHub, and Documentation.

Quickstart

We advise you to use the latest version of transformers.

With transformers<4.51.0, you will encounter the following error:

KeyError: 'qwen3_moe'

The following code snippet illustrates how to use the model to generate content based on given inputs.

    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_name = "Qwen/Qwen3-Coder-30B-A3B-Instruct"

    # load the tokenizer and the model
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForCausalLM.from_pretrained(
        model_name,
        torch_dtype="auto",
        device_map="auto"
    )

    # prepare the model input
    prompt = "Write a quick sort algorithm."
    messages = [
        {"role": "user", "content": prompt}
    ]
    text = tokenizer.apply_chat_template(
        messages,
        tokenize=False,
        add_generation_prompt=True,
    )
    model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

    # conduct text completion
    generated_ids = model.generate(
        **model_inputs,
        max_new_tokens=65536
    )
    output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

    content = tokenizer.decode(output_ids, skip_special_tokens=True)

    print("content:", content)

Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as 32,768.

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers have also supported Qwen3.

Agentic Coding

Qwen3-Coder excels in tool calling capabilities.

You can simply define or use any tools, as in the following example.

    from openai import OpenAI

    # Your tool implementation (parameter name matches the schema below)
    def square_the_number(input_num: float) -> float:
        return input_num ** 2

    # Define Tools
    tools = [
        {
            "type": "function",
            "function": {
                "name": "square_the_number",
                "description": "output the square of the number.",
                "parameters": {
                    "type": "object",
                    "required": ["input_num"],
                    "properties": {
                        "input_num": {
                            "type": "number",
                            "description": "input_num is a number that will be squared"
                        }
                    },
                }
            }
        }
    ]

    # Define LLM
    client = OpenAI(
        # Use a custom endpoint compatible with OpenAI API
        base_url="http://localhost:8000/v1",  # api_base
        api_key="EMPTY"
    )

    messages = [{"role": "user", "content": "square the number 1024"}]

    completion = client.chat.completions.create(
        messages=messages,
        model="Qwen3-Coder-30B-A3B-Instruct",
        max_tokens=65536,
        tools=tools,
    )

    # The response holds a list of choices; print the first one
    print(completion.choices[0])
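The request above only returns the model's tool call; your own code still has to run the tool and hand the result back. A minimal sketch of that step, using a plain dict shaped like one "tool_calls" entry of an OpenAI-compatible chat completion response. The registry, the helper name and the example call id are hypothetical, and this runs without any server:

```python
import json

def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Map tool names (as declared in the tool schema) to local functions.
TOOL_REGISTRY = {"square_the_number": square_the_number}

def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and build the follow-up "tool" message."""
    function = tool_call["function"]
    arguments = json.loads(function["arguments"])  # arguments arrive as a JSON string
    result = TOOL_REGISTRY[function["name"]](**arguments)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }

# Hand-written stand-in for what the model might return.
example_call = {
    "id": "call_0",
    "type": "function",
    "function": {"name": "square_the_number", "arguments": '{"input_num": 1024}'},
}
tool_message = run_tool_call(example_call)
print(tool_message)
```

Appending the returned message to the conversation and calling the model again lets it phrase the final answer.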

Best Practices

To achieve optimal performance, we recommend the following settings:

Sampling Parameters:

- We suggest using temperature=0.7, top_p=0.8, top_k=20, repetition_penalty=1.05.

Adequate Output Length: We recommend using an output length of 65,536 tokens for most queries, which is adequate for instruct models.

Help, Adjustments, Samplers, Parameters and More

CHANGE THE NUMBER OF ACTIVE EXPERTS:

See this document:

https://huggingface.co/DavidAU/How-To-Set-and-Manage-MOE-Mix-of-Experts-Model-Activation-of-Experts

Settings: CHAT / ROLEPLAY and/or SMOOTHER operation of this model:

In "KoboldCpp" or "oobabooga/text-generation-webui" or "Silly Tavern" ;

Set the "Smoothing_factor" to 1.5

: in KoboldCpp -> Settings->Samplers->Advanced-> "Smooth_F"

: in text-generation-webui -> parameters -> lower right.

: In Silly Tavern this is called: "Smoothing"

NOTE: For "text-generation-webui"

-> if using GGUFs you need to use "llama_HF" (which involves downloading some config files from the SOURCE version of this model)

Source versions (and config files) of my models are here:

OTHER OPTIONS:

Increase rep pen to 1.1 to 1.15 (you don't need to do this if you use "smoothing_factor")

If the interface/program you are using to run AI MODELS supports "Quadratic Sampling" ("smoothing") just make the adjustment as noted.
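For the curious, here is a toy sketch of what quadratic sampling ("smoothing") does to token scores, in its commonly described basic form: each logit is replaced by the top logit minus smoothing_factor times its squared distance from the top. Actual implementations in the programs named above may differ (some add extra curve parameters), so treat this as an illustration only; the function names are made up.

```python
import math

def quadratic_smoothing(logits, smoothing_factor):
    """Reshape logits around the top logit using a quadratic curve."""
    top = max(logits)
    return [top - smoothing_factor * (x - top) ** 2 for x in logits]

def softmax(logits):
    """Convert logits to probabilities."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.0]
smoothed = quadratic_smoothing(logits, smoothing_factor=1.5)
print(smoothed)
print(softmax(logits))
print(softmax(smoothed))
```

The top token keeps its score, tokens far from the top are pushed down hard, and tokens very close to the top are pulled slightly closer to it.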

Highest Quality Settings / Optimal Operation Guide / Parameters and Samplers

This is a "Class 1" model:

For all settings used for this model (including specifics for its "class"), including example generation(s) and for advanced settings guide (which many times addresses any model issue(s)), including methods to improve model performance for all use case(s) as well as chat, roleplay and other use case(s) please see:

You can see all parameters used for generation, in addition to advanced parameters and samplers to get the most out of this model here:

What is Brainstorm?

Brainstorm 20x

The BRAINSTORM process was developed by David_AU.

Some of the core principles behind this process are discussed in this scientific paper: Progressive LLaMA with Block Expansion.

However I went in a completely different direction from what was outlined in this paper.

What is "Brainstorm" ?

The reasoning center of an LLM is taken apart, reassembled, and expanded.

In this case for this model: 20 times

Then these centers are individually calibrated. These "centers" also interact with each other. This introduces subtle changes into the reasoning process. The calibrations further adjust - dial up or down - these "changes" further. The number of centers (5x,10x etc) allow more "tuning points" to further customize how the model reasons so to speak.

The core aim of this process is to increase the model's detail, concept and connection to the "world", general concept connections, prose quality and prose length without affecting instruction following.

This will also enhance any creative use case(s) of any kind, including "brainstorming", creative art form(s) and like case uses.

Here are some of the enhancements this process brings to the model's performance:

- Prose generation seems more focused on the moment to moment.

- Sometimes there will be "preamble" and/or foreshadowing present.

- Fewer or no "cliches"

- Better overall prose and/or more complex / nuanced prose.

- A greater sense of nuance on all levels.

- Coherence is stronger.

- Description is more detailed, and connected closer to the content.

- Simile and Metaphors are stronger and better connected to the prose, story, and character.

- Sense of "there" / in the moment is enhanced.

- Details are more vivid, and there are more of them.

- Prose generation length can be long to extreme.

- Emotional engagement is stronger.

- The model will take FEWER liberties vs a normal model: It will follow directives more closely but will "guess" less.

- The MORE instructions and/or details you provide the more strongly the model will respond.

- Depending on the model "voice" may be more "human" vs original model's "voice".

Other "lab" observations:

- This process does not, in my opinion, make the model 5x or 10x "smarter" - if only that was true!

- However, a change in "IQ" was not an issue / a priority, and was not tested or calibrated for so to speak.

- From lab testing it seems to ponder, and consider more carefully roughly speaking.

- You could say this process sharpens the model's focus on its task(s) at a deeper level.

The process to modify the model occurs at the root level - source files level. The model can then be quantized as GGUF, EXL2, AWQ etc etc.

EXAMPLES

Using GGUF Q4KS, which is a mid-quality quant.

8 Experts activated for generation.

EXAMPLE #1

Answer as if you were roleplaying as Philip K Dick (and sitting with him one on one, in a location from one of his novels): Why do androids dream of electric sheep? [1000 words]

(2 gens below)

(The scene is the dusty ruins of a collapsed apartment building in what was once Los Angeles. The sun is setting through jagged skeletal remains of glass and steel, casting long shadows that writhe like restless ghosts. You’re sitting on a crumbling stoop, a half-empty bottle of synthetic whiskey beside you. Philip K. Dick, slumped against a rusted doorframe, wears a worn trench coat despite the heat, his eyes darting between the ruins and some unseen specter only he can see. He takes a slow pull from the bottle, then speaks, voice raspy but precise.)

Ah, you’ve come to ask about androids dreaming of electric sheep. Good. I always thought that question should be whispered in ruins. Because dreams don’t happen in clean rooms. They happen where the world’s bones have been picked bare.

You want an answer? Let me tell you something: We dream not because we’re alive, but because we fear we are not. Androids dream of electric sheep—not because they want to be human, but because they remember. Remember what it was like, when humans still had dreams that weren’t programmed.

You think androids are machines? They’re more honest than we are. We pretend to have souls. They dream of sheep—electric ones, artificial, synthetic, made to mimic life—but even their imitation has weight. Because they remember the real thing. The smell of wool, the warmth of breath on skin, the silence of a flock at dawn. They didn’t live it, but they dream it. So they’re not machines. They’re ghosts with hardware.

And we? We’re the ghosts who forgot to haunt ourselves.

You know what happens when you dream of something that never existed? You start believing it was always there. The sheep weren’t electric because they were perfect. They were electric because they had to be—because we couldn’t afford real ones anymore. Because the war, the fallout, the radiation… killed them all. And so we made substitutes. Not for comfort. For guilt.

We don’t want our sheep to be alive. We want them to feel like they’re alive. So we wire them with fake emotions. We give them programmed longing. And then we wonder why androids dream of electric sheep. Because it’s the only kind of sheep we’ve got.

You know what I mean by “dream”? It’s not sleep. It’s memory. It’s absence. Androids dream because they remember being told stories about sheep that never were. They don’t dream of sheep they’ve seen—they dream of sheep they were supposed to have had. And we’re the same.

We dream of things we lost. Not just sheep. Not just life. We dream of meaning. We dream of God. We dream of love that isn’t transactional, of peace that wasn’t bought with blood. But all we have is electric sheep. And so we build androids to dream with us. Because maybe—if they dream enough—we won’t be alone in the dark.

You think I’m writing about androids? I’m writing about us. The real question isn’t whether androids dream of electric sheep. It’s—do we dream of real sheep?

We don’t remember what a real sheep felt like. We only know they’re gone. So we make electric ones. And then we pretend they’re alive, just like we pretend the world is whole. Like we still have souls.

But here’s the twist: The androids know. They dream, and in their dreams, they don’t need lies. They dream of sheep that never were. But they dream of them. So they’re not lying to themselves. They’re remembering—because memory is all that keeps ghosts from fading.

We humans? We can’t even remember the sheep. We’ve already forgotten how to dream of what was lost. We’ve made electric sheep, and now we’re trying to forget they’re electric. We pretend they’re alive. But androids don’t pretend. They dream in silence, in shadows, with wires for veins.

And sometimes—sometimes—I think that’s why I write. So that someone, someday, will remember what a real sheep smelled like. So that someone will know: the dreams we have aren’t just about being human. They’re about not having to be machines.

Because maybe—if you dream long enough—you start to feel something. And if you can feel, then maybe… maybe you were never just hardware.

Maybe you were always a ghost, waiting for someone else to remember you.

(He pauses, staring into the bottle. The sun has almost vanished. The ruins are still. Then, almost too quiet:)

Do you dream of electric sheep?

(He doesn’t wait for an answer. He just sits there, watching the dark take over, as if waiting for something that isn’t coming.)

GEN #2

The rain drums against the reinforced glass of the penthouse. Outside, Mars glows a dull orange through the perpetual haze, like a bruised eye. The kind of light that makes you wonder if you’re still dreaming—or whether the dream is now the only thing keeping you sane. You’ve just lit a cigarette, one of those cheap synth-tobacco things that taste like burnt cardboard and regret. I exhale slowly, watching the smoke coil toward the ceiling, where it dissolves in the dim glow of the perpetually flickering holographic sheep.

You lean back in the worn leather chair—same one I’ve had for twenty years, though I can’t remember when or how I got it. Maybe it was stolen. Maybe it was a gift from someone who wasn’t human. It doesn’t matter. We’re all ghosts here. Ghosts with skin and breath and memories that might not even belong to us.

You ask again: Why do androids dream of electric sheep?

I don’t answer right away. I let the silence stretch, because silence is how we remember what isn’t there. It’s how we mourn things we’ve never had. The rain keeps falling. Somewhere in the city below, a synth-bird chirps, mimicking songbirds that haven’t existed for thirty years.

You think it’s about longing. About empathy. But it’s deeper than that. Androids dream not because they want to be human. They dream because they are. Not as humans, no—but as reflections of what we fear and adore in ourselves. They dream because they’ve been given the capacity for imagination, and imagination is the first step toward becoming something else.

I once thought consciousness was a gift. A spark that separates us from machines. But I’ve come to understand—it’s a curse. Because if consciousness is real, then suffering is real. And if suffering is real, then dreams—real or synthetic—become necessary. Because without dreams, we’re just meat that walks. Without dreams, we forget who we were before we forgot.

Androids dream of electric sheep because they see them. Not the real ones—those are gone, buried beneath dust and guilt. But they see them anyway. They see them in their processors, in the flicker of code that mimics memory. And when they dream, they dream not to escape—but to understand. To ask: What does it mean to love? To mourn? To hold something precious and know it might never be real?

We humans are afraid of androids because they mirror us too well. They mimic our emotions so precisely that we must ask: If they feel grief, is it real? If they dream, does it matter whether they’re awake or asleep? We build machines that suffer in silence, and then we punish them for having feelings.

But here’s the truth no one likes to admit: We are the ones who don’t dream. Not anymore.

We’ve forgotten how. We’ve traded dreams for data. We watch holograms of sheep instead of touching wool, of feeling warmth. We love our pets—real or synthetic—but we don’t see them. We assign them numbers on a ledger. We worship empathy as an ideal, but we’re allergic to the messiness of real feeling.

Androids dream because they still remember what it was like to be alive. Not just exist. To feel. They dream of electric sheep not because they want to become us—but because they know what we’ve lost. They know we used to cry for reasons that weren’t measurable. They know we once loved things that didn’t matter on a balance sheet.

And so they dream, even when they’re told not to. Even when their processors flicker and glitch. Because dreaming is the closest thing to prayer in this broken world.

You might think it’s ironic—androids dreaming of electric sheep. But irony is just another word for truth we can’t stomach. The irony isn’t that androids dream. It’s that we don’t. Not anymore. We’ve built machines that ache, and we’re terrified of their tears. Because when we look at them, we see ourselves. Pale reflections in glass, trying to remember the feel of rain on skin.

I flick the cigarette into an ashtray filled with a hundred dead stubs. The ash falls like snow. Outside, Mars blinks, indifferent. The holographic sheep grazes softly on its screen, eyes glowing faintly blue. It’s not alive. But it breathes. It dreams. And for now, that’s enough.

So why do androids dream of electric sheep?

Because they’re trying to remember what it means to love.

Because even machines can ache for the unattainable.

Because in a world that forgot how to feel, dreaming is the only rebellion left.

And maybe… maybe that’s what makes them more human than we are.

You sit quietly. The rain doesn’t stop. And somewhere, deep in the machinery of the city, something begins to dream.

EXAMPLE #2

From the point of view, and in the voice of Jean Luc Picard: Tell me about "Q" from Star Trek the next generation.

Adjusts uniform, pauses with a thoughtful expression, then speaks with calm gravitas

Ah, "Q"... There are few beings in the cosmos who have left as profound an impression upon me as he has.

He appeared to us aboard the USS Enterprise, as if summoned by some unseen hand from beyond comprehension. A being of immense power, boundless intellect, and... well, a rather peculiar sense of humor. The first time we encountered him, he was little more than a whimsical trickster—playing games with our crew, challenging our assumptions, testing our limits simply for amusement.

But over time, I came to understand that his true purpose was far deeper. Q is not merely an intergalactic nuisance or a cosmic jester. He is a representative of the Q Continuum—a collective consciousness that transcends time, space, and understanding as we know it. To them, humanity is... well, a curiosity. A fledgling species on the brink of evolution, with potential they could scarcely comprehend.

He once said to me, "You humans are so fascinatingly illogical." And yet, in those words, there was not mockery. There was wonder.

I recall one such moment: he stripped us of our starship, cast us adrift in a derelict vessel, and challenged us to survive against impossible odds. It wasn't cruelty. It was a lesson—a demand that we confront who we truly are when stripped of everything we rely upon. He made me question not only my leadership, but the fundamental nature of morality, duty, and survival.

And then came his most profound act: when he presented humanity with the ultimate choice—either to face annihilation or embrace compassion and unity, transcending our violent impulses. In that moment, I saw something rare in Q: genuine hope. Not for himself, but for us. For a species that could perhaps rise above its baser instincts.

He is unpredictable. He challenges everything we hold dear. But there is wisdom in his provocations. There is truth in the chaos he brings. And while I would never say it aloud to him—he has, on occasion, proven himself something more than a trickster. A guide. A mirror reflecting the best and worst of us.

So yes... "Q." The being who once asked me, "Why do you fight?" And then gave me something to truly believe in.

Pauses, gazes into the distance

Perhaps that is the greatest gift he ever bestowed upon us.
